Key takeaways

- A/B testing transformed GoDaddy’s product development from opinion-based to data-driven decision-making

- Failed experiments provide valuable learning opportunities and drive innovation

- Experimentation culture promotes cross-functional collaboration and faster, more confident decision-making

(Editor’s note: This post is the first in a four-part series that discusses experimentation at GoDaddy. Check back for the next part in the series.)

A/B testing wasn’t always central to product development at GoDaddy. Like many teams, we relied on customer feedback, business priorities, and our best judgment. And while that worked to some extent, we knew we could be more data-driven.

Over time, we’ve built and embraced a culture of experimentation, with A/B testing central to how we build products. At any given time, GoDaddy teams are running hundreds of experiments across all customer touchpoints. A/B testing is more than just a validation tool, though: it serves as a guiding framework for decision-making that shapes our internal product culture. This approach allows us to act faster, with greater confidence, while remaining aligned with our customers’ needs.

What’s A/B testing?

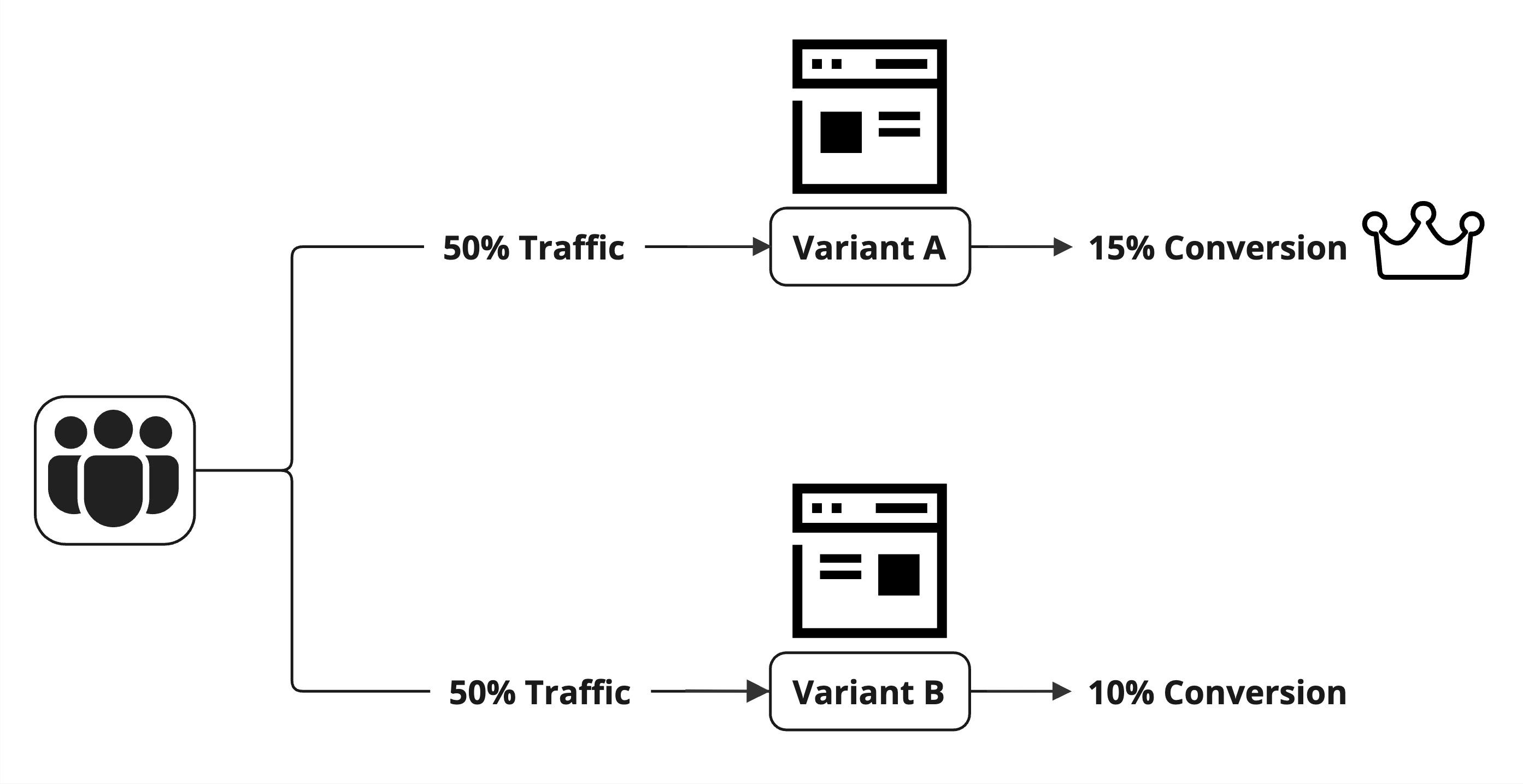

A/B testing is a method of comparing two or more versions of a product, feature, or interface to determine which one performs better based on specific metrics. In a typical A/B test, users are randomly assigned to different groups, with one group serving as the control group and others as experimental variants.

The diagram shows an overview of the A/B testing approach
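The random assignment described above can be sketched in a few lines of Python. This is a minimal illustration, not a description of GoDaddy’s actual assignment service: the function name `assign_variant`, the experiment name, and the choice of SHA-256 hashing are all assumptions made for the example. Hashing the user ID together with the experiment name gives each user a stable group while keeping the split close to even.

```python
import hashlib

def assign_variant(user_id: str, experiment: str,
                   variants=("control", "treatment")) -> str:
    """Map a user to a variant deterministically (hypothetical helper).

    The same user always lands in the same group for a given experiment,
    and different experiments shuffle users independently because the
    experiment name is part of the hashed key.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]

# Usage: count how 10,000 made-up users are split between the two groups.
counts = {}
for i in range(10_000):
    variant = assign_variant(f"user-{i}", "example-experiment")
    counts[variant] = counts.get(variant, 0) + 1
print(counts)
```

A deterministic hash is only one way to randomize. Its appeal is that a returning user keeps seeing the same experience without any stored assignment table.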

This type of experiment is often referred to as a randomized controlled experiment (RCE). The results are then analyzed using statistical methods to determine, with statistical significance, which version leads to better outcomes.
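As one illustration of what such an analysis can look like, the sketch below runs a two-proportion z-test on conversion counts. The post does not say which statistical methods GoDaddy uses, so treat the test choice, the function name `two_proportion_z_test`, and the sample numbers as assumptions for the example only.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided z-test for a difference in conversion rates.

    Returns the z statistic and the p-value under the null hypothesis
    that both groups share the same underlying conversion rate.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate: the best estimate of the shared rate if the null is true.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Made-up counts: 500 of 10,000 control users convert vs. 570 of 10,000 in the variant.
z, p = two_proportion_z_test(conv_a=500, n_a=10_000, conv_b=570, n_b=10_000)
print(f"z = {z:.2f}, p-value = {p:.4f}")
```

A p-value below a threshold chosen before the test (0.05 is a common convention) would be read as a statistically significant difference between the two versions.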

A/B testing is a popular RCE type, but not the only one. The following are common RCE types:

- A/B: compares two versions (A vs. B). This is the most common type of test, used for simple changes such as a new button color or headline.

- Multi-variant (or A/B/n): extends A/B testing by comparing three or more versions to see which one resonates most with the audience.

- Multivariate: instead of testing just one element (as in A/B testing), tests combinations of multiple changes at once to identify the most effective mix.

- Champion/Challenger: a “Challenger” variant undergoes multiple refinements before being tested against the current “Champion”.
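To make the difference between A/B/n and multivariate tests concrete, the sketch below enumerates the cells of a small multivariate test. The element names and values are invented for illustration. The point is that every combination becomes its own variant, so the number of cells (and the traffic needed) grows multiplicatively.

```python
from itertools import product

# Hypothetical page elements and the options under test for each one.
elements = {
    "headline": ["Find your domain", "Claim your name"],
    "button_color": ["green", "orange"],
    "layout": ["single-column", "two-column"],
}

def multivariate_cells(elements: dict) -> list:
    """Return every combination of element options as one test cell."""
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*elements.values())]

cells = multivariate_cells(elements)
print(f"{len(cells)} cells to test")
for cell in cells[:3]:
    print(cell)
```

With three elements of two options each, the test has 2 × 2 × 2 = 8 cells, compared with the two cells of a plain A/B test.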

While A/B testing and its variations rely on randomization for precise comparisons, another approach is pre/post testing, which measures performance before and after a change without a control group. Though more susceptible to external influences like seasonality, it can still provide valuable insights when randomization isn’t possible.
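The arithmetic below is a simplified sketch of why pre/post results are more exposed to outside influences. All three rates are invented, and sampling noise is ignored so that only the bias is visible. A seasonal shift that happens to coincide with the launch is folded into the pre/post estimate, while it cancels out in a randomized comparison because both groups experience it.

```python
# Hypothetical numbers, chosen only to illustrate the bias.
BASELINE_RATE = 0.100   # conversion rate before the change
SEASONAL_SHIFT = 0.020  # external lift that coincides with the launch
TRUE_EFFECT = 0.010     # what the change itself actually contributes

def pre_post_estimate() -> float:
    """Compare the period after launch with the period before it."""
    before = BASELINE_RATE
    after = BASELINE_RATE + SEASONAL_SHIFT + TRUE_EFFECT
    return after - before

def ab_estimate() -> float:
    """Compare treatment with a control group running at the same time."""
    control = BASELINE_RATE + SEASONAL_SHIFT
    treatment = BASELINE_RATE + SEASONAL_SHIFT + TRUE_EFFECT
    return treatment - control

print(f"pre/post estimate: {pre_post_estimate():.3f}")
print(f"A/B estimate:      {ab_estimate():.3f}")
```

In this toy setup the pre/post comparison attributes the seasonal shift to the change, while the A/B comparison recovers only the effect of the change itself.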

Beyond guesswork

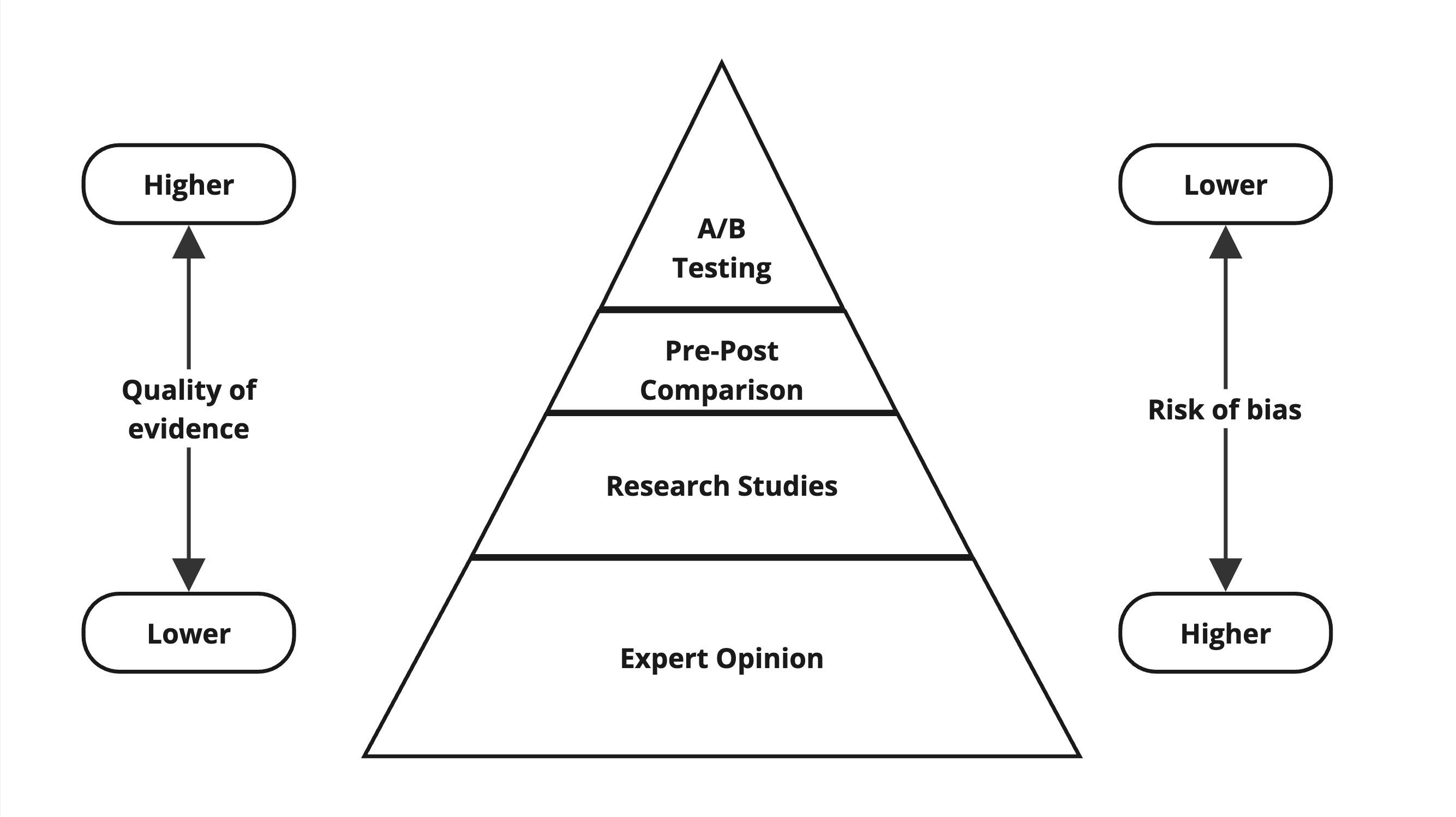

When we redesigned GoDaddy’s domain transfer flow, we assumed simplifying the interface would improve success rates. While this did happen, we soon realized that external factors like seasonality, marketing campaigns, and macro trends made it difficult to isolate the true impact of our changes. Were the results due to our redesign, or something else? The following diagram shows A/B testing on a simplified hierarchy of evidence pyramid:

That’s where A/B testing made a difference. Unlike the pre/post analysis we used before, it allowed us to directly compare two versions under the same conditions. A/B testing helped us:

- control for external factors, so we could pinpoint exactly what was driving improvements.

- gain more confidence in our results, so teams could iterate faster and make data-driven adjustments to achieve the desired outcome.

- prioritize the changes that delivered the greatest impact, ensuring we focused on the most valuable improvements first.

Shifting to data-driven experimentation empowered our product engineering teams to make decisions that not only achieved our targets, but also delivered value to our customers and stakeholders.

Maximizing learning opportunities

One of the biggest lessons we’ve learned about A/B testing is that statistically significant losses can be just as valuable as statistically significant wins.

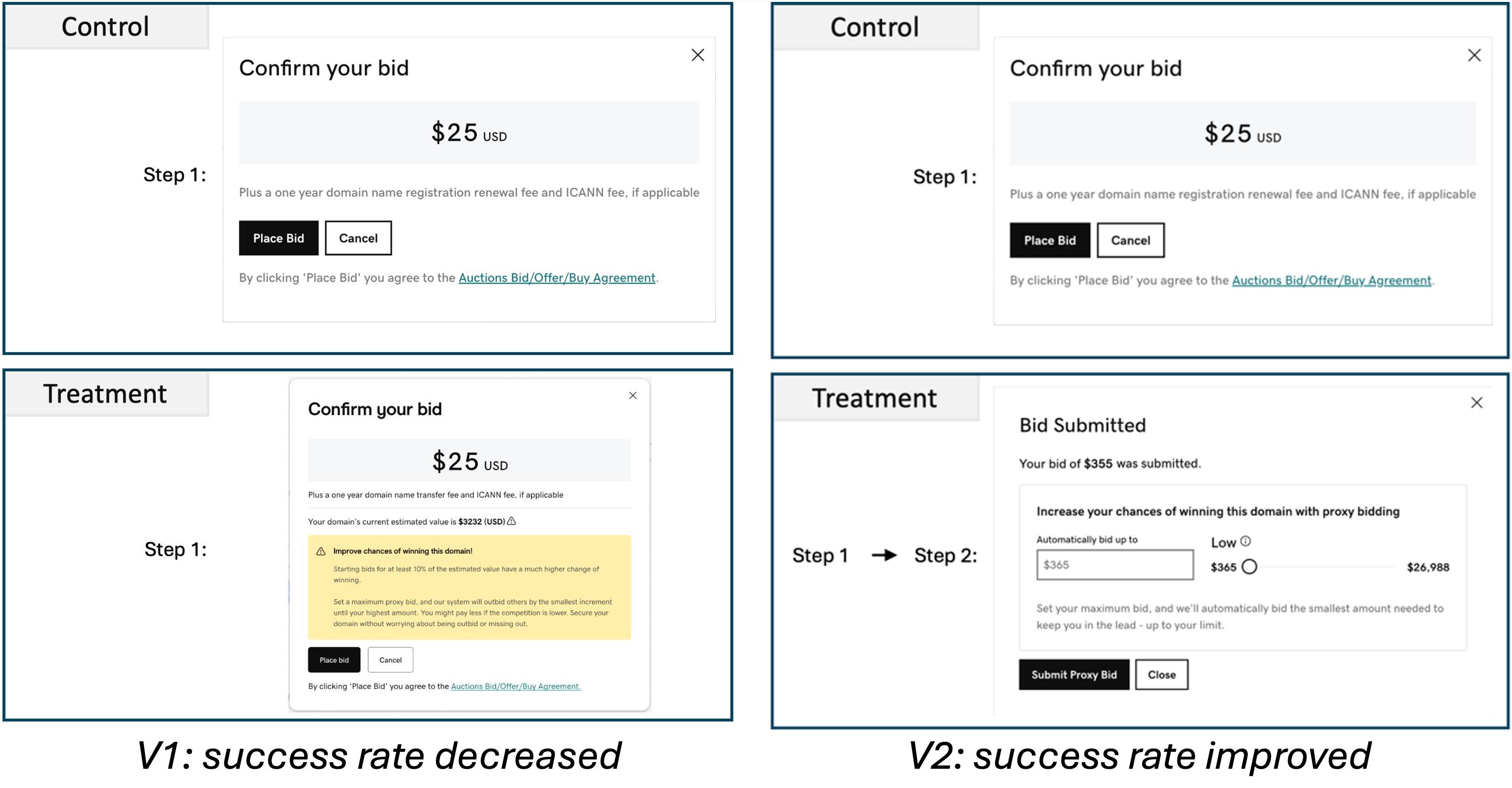

Some of our most valuable customer insights came from failed A/B experiments. For example, our first iteration of a new GoDaddy Auctions bidding flow aimed to help buyers win expired domains but had the opposite effect. Instead of treating it as a setback, the team saw it as a learning opportunity. We:

- reviewed results to understand the frictions within the flow.

- collected additional feedback from surveys and analyzed session recordings.

- brought together the team to brainstorm solutions based on the findings.

- developed a new bidding flow experience addressing the identified frictions.

- launched an improved version, leading to a winning iteration.

This collaborative, data-driven approach not only uncovered the root cause of the issue but also led to an improved iteration that strengthened customer success.

The following image shows two A/B experiment iterations:

A/B testing is a powerful way to validate ideas based on customer actions. But it’s just one piece of our customer empathy toolkit. To understand both the “what” and the “why”, GoDaddy teams are encouraged to combine A/B testing with a range of tools that offer deeper insights into customer behavior and feedback. There’s no stronger motivator to use these tools than a losing experiment. In those moments, we gain the insight to rethink, adjust, and create something better.

The power of velocity

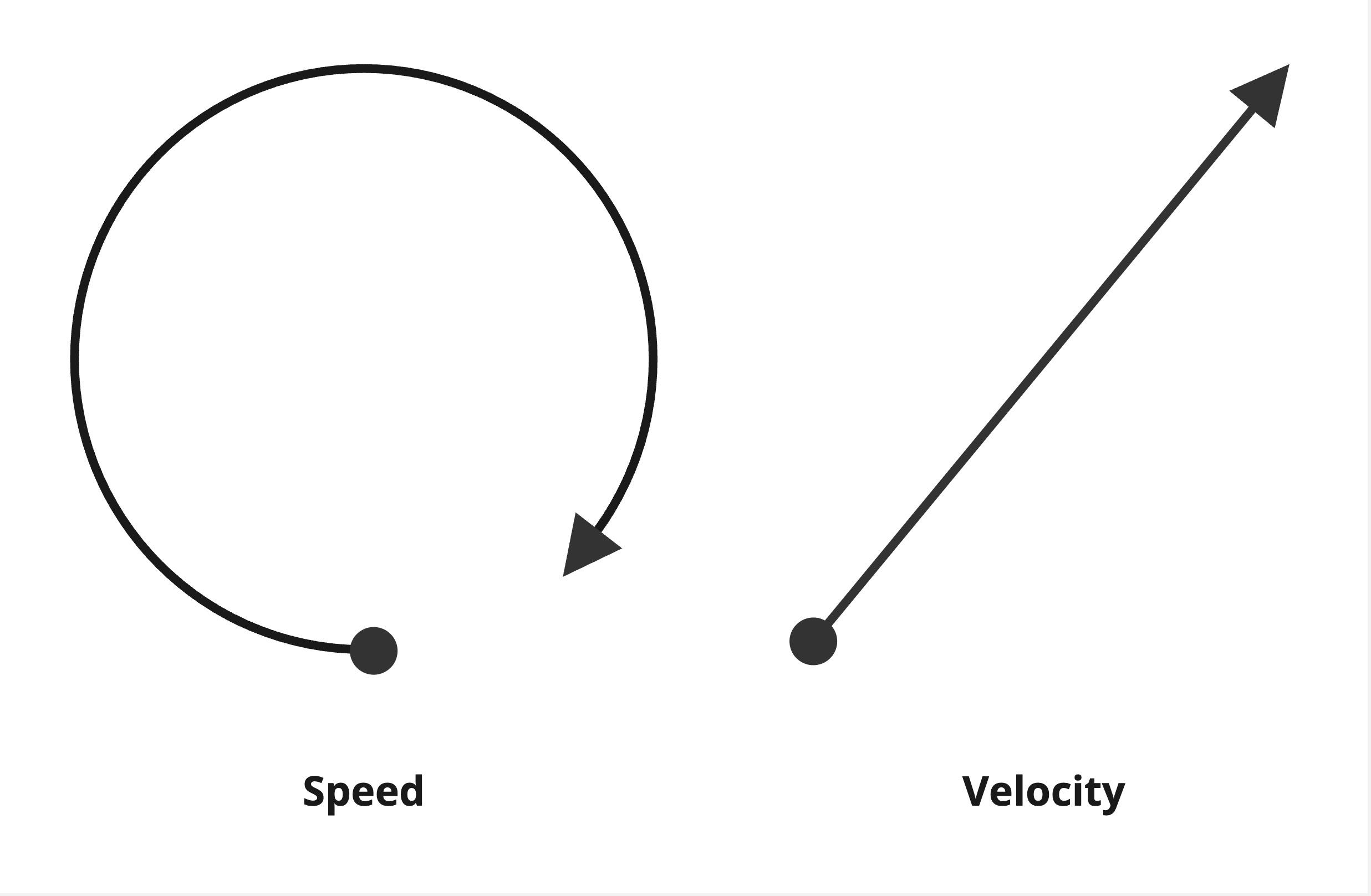

One of the biggest benefits of A/B testing is that it enables our teams to move faster with purpose. That’s meaningful progress. Speed is about how fast you go, velocity is about moving in the right direction. You can be running at full speed, but if you’re going in circles, you’re not getting anywhere. In business, progress matters more than motion.

The following image illustrates the difference between speed and velocity:

Before adopting A/B experimentation, our product cycles were slower and sometimes disconnected from long-term strategy. We’d spend months building and launching features, only to scramble afterward to analyze metrics. By the time we understood what didn’t work, it was too late to adjust. We were already focused on the next project.

But velocity isn’t just about moving quickly; it’s about learning in a way that compounds over time. As our CEO Aman noted, a healthy experimentation culture balances positive, negative, and inconclusive outcomes. If every experiment wins, we might be playing it too safe instead of pushing boundaries. The most impactful experiments don’t just move metrics; they advance our understanding of the customer and shape product strategy. A scattered, one-off experiment might drive a short-term win, but experiments aligned with product themes and clearly defined customer problems lead to stronger product experiences.

Catalyst for collaboration

A/B testing hasn’t just helped us build better products. It has transformed how teams collaborate at GoDaddy. Before we adopted a structured approach to experimentation, teams like product, design, engineering, business analytics, and customer success often worked in silos. The A/B testing process itself has naturally become a unifying force. Each experiment is a cross-functional effort from the start, with everyone involved in defining the hypothesis, designing the test, monitoring results, discussing them, iterating, and sharing the learnings.

GoDaddy aspires to build great products and experiences that solve real customer problems. Our gold standard is experiments that use randomization and robust statistical methods, which empower teams to:

- provide confidence in decision-making (“How certain can I be of this decision?”).

- find incremental value (“What return can I expect?”).

- prove it’s causal (“Pulling this lever results in this outcome.”).
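The first two questions in the list above can be made concrete with a confidence interval on the difference in conversion rates. The sketch below is one possible way to do that. It uses a normal-approximation (Wald) interval, and the function names, the 95% level, and the sample counts are assumptions for illustration, not GoDaddy’s documented method. The interval’s width speaks to certainty, its center to the expected return, and its position relative to zero sorts the outcome into positive, negative, or inconclusive.

```python
from math import sqrt
from statistics import NormalDist

def lift_confidence_interval(conv_c: int, n_c: int, conv_t: int, n_t: int,
                             confidence: float = 0.95):
    """Wald interval for the absolute difference in conversion rates.

    Returns (low, estimate, high) for treatment rate minus control rate.
    """
    p_c = conv_c / n_c
    p_t = conv_t / n_t
    diff = p_t - p_c
    se = sqrt(p_c * (1 - p_c) / n_c + p_t * (1 - p_t) / n_t)
    z_crit = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return diff - z_crit * se, diff, diff + z_crit * se

def classify(low: float, high: float) -> str:
    """Label an experiment by where its interval sits relative to zero."""
    if low > 0:
        return "positive"
    if high < 0:
        return "negative"
    return "inconclusive"

# Made-up counts: control converts 500 of 10,000; treatment converts 570 of 10,000.
low, estimate, high = lift_confidence_interval(500, 10_000, 570, 10_000)
print(f"estimated lift: {estimate:.4f} (95% CI {low:.4f} to {high:.4f})")
print(classify(low, high))
```

The third question, causality, is not something the interval adds. It comes from the randomization itself, which is what makes the two groups comparable in the first place.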

Experimentation has had a powerful impact on our company culture, helping us move away from the “HIPPO” culture—where the Highest Paid Person’s Opinion calls the shots. By focusing on evidence-based decisions, we’ve been able to speed up execution and give teams the freedom to make choices backed by data. Decisions grounded in evidence lead to better outcomes, and that’s what A/B testing is all about.

Conclusion

In the end, A/B testing isn’t just a tool; it’s a mindset that has transformed how we build products at GoDaddy. It’s given us the confidence to move faster, learn together, and align more closely with our customers’ needs. What started as a way to validate ideas has become the backbone of our product development and encouraged us to embrace both wins and losses as opportunities for growth.

In the next article, we’ll dive deeper into how A/B testing is helping break down silos and bring teams together at GoDaddy. Stay tuned!